Tracking What Matters, Together

In my last post, I reflected on Natalie Wexler’s reminder that reading comprehension isn’t a generic skill; it’s deeply tied to knowledge, vocabulary, and experience with language. That idea has guided our work designing, piloting, and refining a prototype to assess comprehension across three key areas: Characters, Literal Information, and Inferential Understanding.

This week, the real work begins: supporting our fourth-grade students who receive reading accommodations as their base classrooms start their novel study.

Deepening the Prototype

Our focus shifted to Chapters 1–2 and 3–4 of Grace Lin’s Starry River of the Sky. We designed, piloted, and revised assessments for each chapter set, keeping the same goals in mind:

- To understand what students comprehend

- To gather evidence that helps us teach

- To build a system that supports student growth over time

We began with a draft: five multiple-choice questions per category for Chapters 1–2. Format and language mattered. We asked:

- Is each question accessible?

- Does it reflect the reading and the thinking we want to see?

- Are the distractors (incorrect answers) plausible but not confusing?

As a team (the Learning Team specialists, Marsha, Jayna, and I), we focused especially on refining the inference questions. After thoughtful discussion, we edited those questions and updated both our prototype and its scoring metrics in Looker Studio. These small adjustments point to something bigger: we’re not just assessing; we’re learning what good assessment requires.

Real-Time Data and Revision

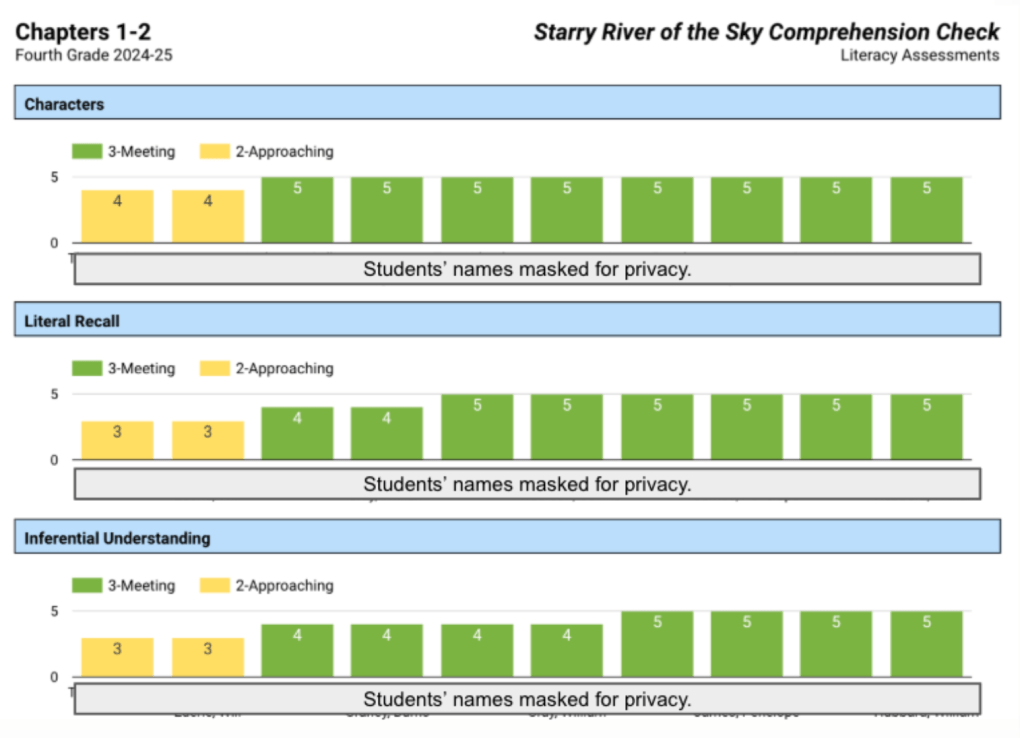

With students taking the assessment, we could visualize the results right away. The graphs in Looker Studio helped us see patterns—where students were strong, where they needed support, and how our design held up under real classroom conditions.

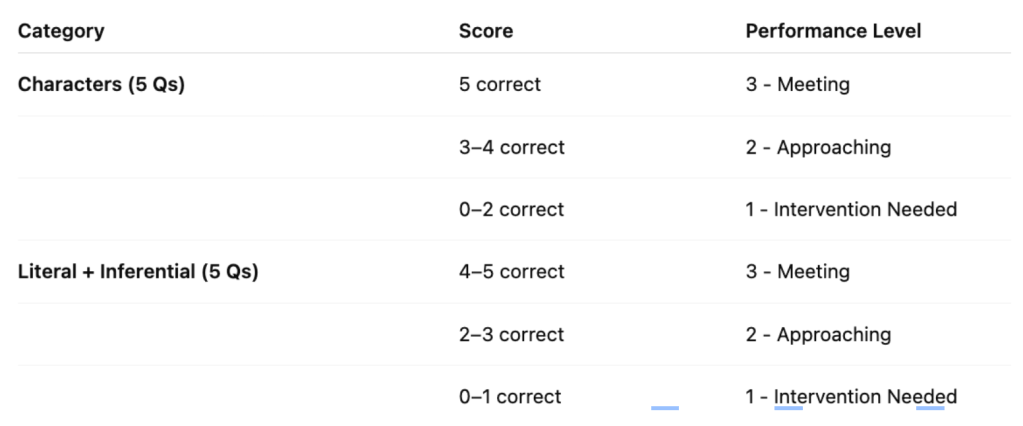

One key insight: our original metric for the Characters category wasn’t giving us a helpful signal.

So we adjusted:

These new benchmarks give us more accurate data—data that’s more usable for instructional planning, Tier II support, and student conversations.

Thinking Beyond Questions

We also took a step to help students navigate the text independently: we printed pronunciation guides from Bookworms and added them to students’ books. It’s a small, strategic nudge toward accessibility, confidence, and autonomy, and a reminder that the mechanics matter when we want comprehension to shine.

What We’re Wondering Now

As we continue collecting data, I find myself returning to a key question:

Is it more useful to track growth by chapter… or by date?

Chapter-based analysis lets us see which parts of the book or types of questions students understand best. Date-based analysis lets us see whether students are making progress over time, regardless of chapter.

We might need both views.

We’re not just testing students; we’re experimenting. We’re putting our assumptions, our questions, our metrics, and our materials to the test. The goal isn’t perfection. The goal is clarity, insight, and next steps.

The Work Ahead

Comprehension isn’t a checkbox. It’s a window.

Our job is to open that window wide enough to see what students know and how they’re thinking.

Through this prototype work, we’re building a shared language for understanding a text and shared tools for measuring that understanding in practical, flexible, and formative ways.

We’re not done yet. But we’re better today than we were a week ago.

And next week, we’ll learn more still.

Stay tuned.

Jill